Synthetic Data: The Invisible Fuel Powering the AI Revolution

Artificially generated data is no longer a niche workaround. It has become a foundational pillar of modern machine learning — reshaping healthcare, finance, autonomous vehicles, and the very models that produce it. Here is everything you need to know.

Summary

Synthetic data, artificially generated information that statistically mirrors real-world datasets, has emerged as one of the most strategically significant technologies in modern AI development. This article examines its definition, generation techniques, industrial applications, documented risks, and regulatory landscape, drawing on research and market data from 2024 to 2026. The central argument is that synthetic data is not a replacement for reality but a disciplined amplifier of it, one that demands rigorous governance to deliver its promise without introducing new and systemic harms.

Section I — What It Is

Defining Synthetic Data: From Workaround to Strategic Asset

Every powerful technology has an origin story that begins with a problem no one wanted to solve the hard way. Synthetic data is no different. Initially conceived as a method to protect user privacy, a way to train systems on data that looked like credit card numbers or phone records without ever touching the real ones, it has since evolved into something qualitatively more significant. Today, synthetic data is artificially generated information that mimics real-world data, used not merely to protect privacy but to fill data gaps, simulate rare events, test new scenarios, and scale AI training pipelines to previously impossible dimensions.[1]

The World Economic Forum’s Global Future Council on Data Frontiers, in its September 2025 briefing paper Synthetic Data: The New Data Frontier, drew a sharp line between the old and the new: “Synthetic data is no longer just a tool of necessity, it’s a driver of innovation.”[2] Entire urban environments can now be replicated for autonomous vehicle testing. Media companies generate massive synthetic training datasets for recommendation systems. Healthcare researchers test treatment plans at scale using synthetic patient records without ever approaching a real medical file.[2]

The formal definition is deceptively simple: synthetic data is artificially generated information that statistically preserves the properties, distributions, and relationships of real-world data. A synthetic healthcare record will have the right demographic distributions, correlating comorbidities, and realistic drug prescriptions, without representing any actual patient. A synthetic financial transaction dataset will exhibit the right fraud signatures, seasonal patterns, and account behaviours, without exposing a single real customer. The art is in the generation; the challenge is in the validation.
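To make "statistically preserves the properties, distributions, and relationships" concrete, here is a minimal sketch that fits a two-column Gaussian to a small table and samples new rows from the fit. The columns (an age and a blood-pressure-like value), the Gaussian assumption, and every function name are illustrative assumptions; production generators are far richer.

```python
import math
import random
import statistics

def pearson(xs, ys):
    """Pearson correlation of two equal-length sequences."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)

def fit_and_sample(real_rows, n_samples, rng):
    """Fit a bivariate Gaussian to (x, y) rows and draw new rows from it."""
    xs, ys = zip(*real_rows)
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sx, sy = statistics.stdev(xs), statistics.stdev(ys)
    r = pearson(xs, ys)
    synthetic = []
    for _ in range(n_samples):
        z1, z2 = rng.gauss(0, 1), rng.gauss(0, 1)
        x = mx + sx * z1
        # Mixing z1 into y reproduces the fitted correlation r.
        y = my + sy * (r * z1 + math.sqrt(1 - r * r) * z2)
        synthetic.append((x, y))
    return synthetic

rng = random.Random(0)
# Hypothetical "real" data: age and a value that rises with age.
real = []
for _ in range(500):
    age = rng.gauss(50, 12)
    real.append((age, 90 + 0.6 * age + rng.gauss(0, 8)))

synthetic = fit_and_sample(real, 5000, rng)
real_r = pearson(*zip(*real))
synth_r = pearson(*zip(*synthetic))
print(f"real r={real_r:.2f}, synthetic r={synth_r:.2f}")
```

The synthetic rows share the means, spreads, and correlation of the source table, yet no row is copied from it. Whether that is enough for a given task is the validation question.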

$1.88B: AI-generated synthetic tabular dataset market, 2025[3]

37.9%: Compound annual growth rate, 2024–2025[3]

$6.73B: Projected market size by 2029[3]

<5%: Accuracy degradation of leading methods vs. real data[4]
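As a quick sanity check on the market figures above, the growth rate implied by the 2025 and 2029 values can be computed directly. This is only arithmetic on the two quoted numbers; the function name is an illustrative assumption.

```python
def implied_cagr(start_value, end_value, years):
    """Compound annual growth rate implied by two values `years` apart."""
    return (end_value / start_value) ** (1 / years) - 1

# Market sizes quoted above, in billions of US dollars.
rate = implied_cagr(1.88, 6.73, 2029 - 2025)
print(f"Implied 2025-2029 CAGR: {rate:.1%}")
```

The result falls between 37 and 38 percent, in line with the growth rate cited alongside the market sizes.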

Section II — How It’s Made

The Generation Toolkit: GANs, Diffusion Models, and Beyond

Understanding synthetic data requires understanding how it is made, and the technical arsenal has expanded dramatically. Three architectural families currently dominate the field, joined more recently by LLM-based generation for text, each with distinct strengths and failure modes.

Generative Adversarial Networks (GANs)

Two neural networks, a generator and a discriminator, compete in an adversarial process until the generator produces data indistinguishable from real examples. Long considered the gold standard for high-fidelity image synthesis, GANs excel at visual data but are prone to training instability and “mode collapse,” where the generator produces only a narrow range of outputs.[5]
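The adversarial process is usually written as a two-player minimax game. The standard formulation is shown below; the symbols are not from this article: G is the generator, D the discriminator, p_data the real data distribution, and p_z the noise distribution fed to the generator.

```latex
\min_G \max_D \;
  \mathbb{E}_{x \sim p_{\text{data}}}\bigl[\log D(x)\bigr]
  + \mathbb{E}_{z \sim p_z}\bigl[\log\bigl(1 - D(G(z))\bigr)\bigr]
```

The discriminator raises this value by scoring real samples high and generated samples low. The generator lowers it by producing samples the discriminator scores as real. Mode collapse corresponds to the generator finding a few outputs that reliably fool the discriminator and producing little else.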

Diffusion Models

The dominant paradigm in image generation as of 2025, diffusion models work by learning to reverse a process of adding noise, gradually denoising random patterns into structured, realistic outputs. They offer superior diversity and stability compared to GANs but carry higher computational cost. Research indexed in PMC confirms they now underpin most text-to-image and image-to-image systems.[6]
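The "process of adding noise" has a simple closed form, sketched below for a toy four-value data point. The linear schedule, its start and end values, and the step count are common illustrative choices, not details from the cited research. Only the forward (noising) half is shown; the learned denoising network that reverses it is omitted.

```python
import math
import random

def linear_beta_schedule(num_steps, beta_start=1e-4, beta_end=0.02):
    """Per-step noise variances, increasing linearly (values are assumptions)."""
    step = (beta_end - beta_start) / (num_steps - 1)
    return [beta_start + i * step for i in range(num_steps)]

def cumulative_signal(betas):
    """alpha_bar[t]: fraction of the original signal variance left after step t."""
    alpha_bars, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        alpha_bars.append(running)
    return alpha_bars

def add_noise(x0, t, alpha_bars, rng):
    """Sample x_t directly from x_0 using the closed-form forward process."""
    a = alpha_bars[t]
    return [math.sqrt(a) * v + math.sqrt(1.0 - a) * rng.gauss(0, 1) for v in x0]

rng = random.Random(0)
alpha_bars = cumulative_signal(linear_beta_schedule(1000))
x0 = [1.0, -1.0, 0.5, 0.0]  # a toy "clean" data point
for t in (0, 250, 999):
    noisy = add_noise(x0, t, alpha_bars, rng)
    print(t, round(alpha_bars[t], 4), [round(v, 2) for v in noisy])
```

Early steps leave the data point almost intact, while by the final step it is close to pure Gaussian noise. Generation runs this in reverse, which is also why sampling costs many network evaluations.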

Variational Autoencoders (VAEs)

VAEs encode data into a latent probability space and then decode it back into synthetic outputs. They carry lower computational costs than GANs, are better suited to smaller datasets, and are far less prone to mode collapse. Particularly effective for generating structured medical records, including image, numerical, and bio-signal data, VAEs remain a core tool in healthcare synthetic data pipelines.[7]
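The encode-then-decode idea is typically trained by maximising the evidence lower bound (ELBO). The notation below is the standard textbook form, not taken from the cited paper: q_phi is the encoder, p_theta the decoder, and p(z) the prior over the latent space.

```latex
\mathcal{L}(\theta, \phi; x) =
  \mathbb{E}_{q_\phi(z \mid x)}\bigl[\log p_\theta(x \mid z)\bigr]
  - D_{\mathrm{KL}}\bigl(q_\phi(z \mid x) \,\|\, p(z)\bigr)
```

The first term rewards accurate reconstruction. The second keeps the encoded distribution close to the prior, so that drawing z from p(z) and decoding it yields plausible synthetic records.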

LLM-Based Generation

Large language models such as GPT-4, Llama, and DeepSeek are increasingly used as “draft machines” for synthetic text data, generating instructions, dialogues, rationales, and tool traces constrained by domain rules and templates. The process is not unconstrained hallucination: models are guided by prompts, filtered by quality checks, and validated before entering training pipelines.[8]
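A minimal sketch of that guide-then-filter loop follows. The template, the slot values, the checks, and the `fake_llm` stand-in are all hypothetical. In practice the `generate` callable would wrap a real model call, and the quality gate would be considerably stricter.

```python
import itertools

TEMPLATE = "Write a {tone} discharge instruction for a patient recovering from {procedure}."

def build_prompts(tones, procedures):
    """Expand the template over every combination of slot values."""
    return [TEMPLATE.format(tone=t, procedure=p)
            for t, p in itertools.product(tones, procedures)]

def passes_checks(text, required_terms, banned_terms, min_words=8, max_words=120):
    """Rule-based quality gate: length bounds plus required and banned vocabulary."""
    lowered = text.lower()
    n_words = len(text.split())
    if not min_words <= n_words <= max_words:
        return False
    if any(term not in lowered for term in required_terms):
        return False
    return not any(term in lowered for term in banned_terms)

def generate_dataset(prompts, generate, required_terms, banned_terms):
    """Call the model on each prompt and keep only drafts that pass the gate."""
    kept = []
    for prompt in prompts:
        draft = generate(prompt)
        if passes_checks(draft, required_terms, banned_terms):
            kept.append({"prompt": prompt, "text": draft})
    return kept

def fake_llm(prompt):
    """Stand-in for a real model call, so the sketch runs offline."""
    if "knee" in prompt:
        return "Rest the knee, keep the dressing dry, and contact your doctor if swelling increases."
    return "As an AI language model, I cannot help."

prompts = build_prompts(["plain-language", "formal"], ["knee surgery", "cataract surgery"])
dataset = generate_dataset(prompts, fake_llm, required_terms=["doctor"], banned_terms=["as an ai"])
print(len(prompts), "prompts ->", len(dataset), "accepted drafts")
```

Keeping the prompt next to each accepted draft preserves provenance, which helps when validation later traces a bad training example back to the template that produced it.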

The practical workflow, as described by AI practitioners writing in late 2025, typically involves layering these approaches. A team might use an LLM to generate 1,000 variants of a discharge instruction or logistics exception, then apply machine learning scoring, clustering, and diversity checks to remove near-duplicate patterns before the data enters a training pipeline.[8] The goal throughout is control, not abundance: synthetic data is most valuable when it is specific, targeting long-tail events, domain-specific gaps, and multimodal workflow combinations that real data cannot provide efficiently.[8]
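The near-duplicate removal step can be approximated with a very small filter. The sketch below swaps the scoring and clustering mentioned above for a plain Jaccard comparison over word trigrams. The threshold and the example sentences are illustrative assumptions.

```python
def shingles(text, size=3):
    """Set of overlapping word n-grams used as a cheap fingerprint of the text."""
    words = text.lower().split()
    if len(words) < size:
        return {tuple(words)}
    return {tuple(words[i:i + size]) for i in range(len(words) - size + 1)}

def jaccard(a, b):
    """Jaccard similarity of two sets (1.0 means identical)."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def drop_near_duplicates(texts, threshold=0.5):
    """Greedy filter: keep a text only if it is not too similar to any kept text."""
    kept, kept_shingles = [], []
    for text in texts:
        candidate = shingles(text)
        if all(jaccard(candidate, seen) < threshold for seen in kept_shingles):
            kept.append(text)
            kept_shingles.append(candidate)
    return kept

variants = [
    "Shipment delayed at customs because the commercial invoice is missing",
    "Shipment delayed at customs because the commercial invoice was missing",
    "Driver could not deliver because the loading dock was closed",
]
unique = drop_near_duplicates(variants)
print(len(variants), "->", len(unique))
```

The pairwise comparison is quadratic in the number of kept texts, which is fine for a thousand variants. Larger batches would call for an approximate method such as MinHash.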

“The competitive edge won’t come from who has the shiniest frontier model license; it will come from who runs the smartest flywheels: curated human corpora, disciplined synthetic data generation, and relentless validation on messy real-world data.” (InvisibleTech AI, December 2025)

Section III — Industrial Applications

Where Synthetic Data Is Already Changing the World

The industries being transformed by synthetic data are not hypothetical. The applications are live, documented, and in several cases mission-critical.

Healthcare

Patient Privacy Without Research Paralysis

Synthetic patient data enables researchers to test treatment plans at scale, train diagnostic AI on synthetic medical imaging, and model drug interactions without exposing protected health information. Published in Frontiers in Digital Health (February 2025), research on rare disease confirms that VAE-GAN hybrid models now generate high-quality patient records that bridge data gaps without compromising confidentiality.[7] The European Health Data Space is emerging as the regulatory framework for this work across the EU.[7]

Finance

Fraud Detection Without Fraud Exposure

Financial institutions use synthetic transaction data to train fraud detection systems, test risk management models, and optimise portfolios, particularly when access to real-world financial data is limited or raises concerns for financial agencies. Research published via arXiv in 2023 and cited across 2025 literature comprehensively catalogues these applications in risk assessment, portfolio optimisation, and algorithmic trading.[9]

Autonomous Vehicles

Safety-Critical Scenarios at Scale

Training self-driving cars requires exposure to rare, high-risk scenarios, the kind that are prohibitively dangerous and expensive to stage in the real world. Companies like Waymo now replicate entire urban environments synthetically. A December 2025 review of generative models for transportation systems, published on ScienceDirect, documents GAN and diffusion model applications across trajectory generation, traffic flow prediction, and sensor data simulation for autonomous driving systems.[10]

Large Language Models

Solving the Training Data Scarcity Crisis

The data landscape for AI training has become dramatically more restrictive. News media and Reddit now protect their intellectual property and sue AI labs for non-compliance. Cloudflare introduced pay-per-crawl for 37.4 million hosted websites as of August 2025. In this environment, synthetic data has become an affordable, scalable solution to the “cold start problem” that engineers face when real data is locked, licensed, or legally inaccessible.[11]

Retail & Marketing

Personalisation Without Privacy Risk

Synthetic customer data helps companies model purchasing behaviours, predict trends, and develop personalised recommendation systems without compromising real customer information. The same approach allows retailers to stress-test loyalty programs, pricing models, and supply chain scenarios using artificially generated consumer populations with statistically realistic characteristics.[12]

Rare Disease Research

Bridging the Data Desert

Rare disease research faces a structural crisis: small, heterogeneous patient populations make clinical trial design and AI-assisted diagnosis severely data-limited. Synthetic data generation, including Conditional VAEs designed specifically for small datasets, is allowing researchers to generate diverse and representative patient records, enabling global research collaboration without the legal and ethical barriers of sharing real patient data across borders.[7]

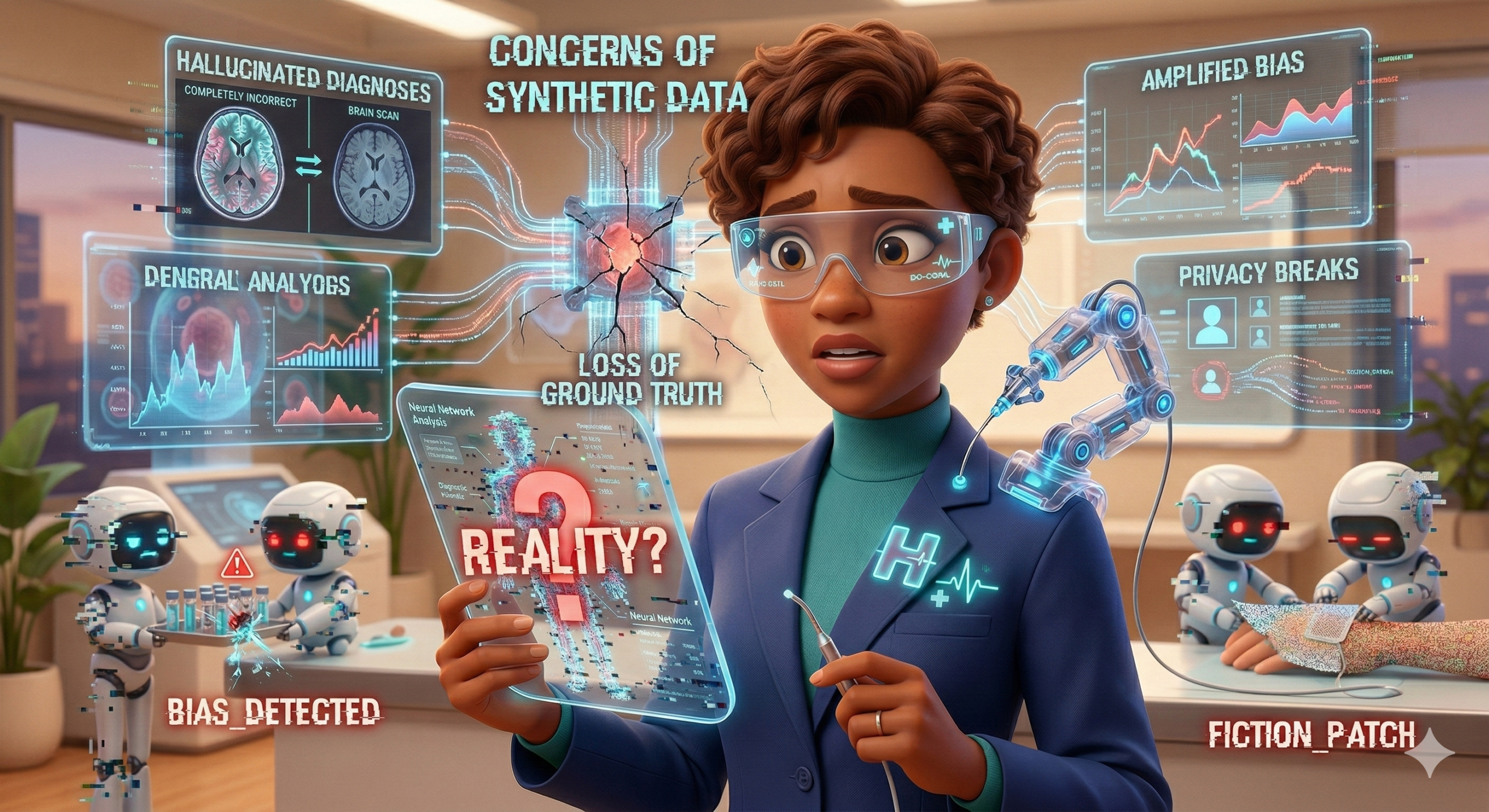

Section IV — The Hidden Risks

The Jekyll and Hyde Problem: When Synthetic Data Goes Wrong

Every powerful tool carries a shadow, and synthetic data is no exception. The research community has identified a cluster of risks that, taken together, constitute what the World Economic Forum calls “significant and systemic threats” that are “magnified by the difficulty of distinguishing between AI-generated and real-world data.”[2] The most alarming of these is a phenomenon researchers have named model collapse.

Model Collapse: The Recursive Trap

Model collapse occurs when AI systems are iteratively trained on their own synthetic outputs, causing progressive quality degradation that follows a two-stage pattern. First, the model loses the long-tail detail of the original human data distribution: rare events, edge cases, and unusual patterns are smoothed out. Then, distinct modes blur together until outputs no longer resemble the real data they were meant to replicate.[13] Researchers have named the worst outcome “Model Autophagy Disorder” (MAD): models that recursively train on their own outputs inevitably lose either quality or diversity unless each training round incorporates sufficient fresh, real data.[13]

A telling analogy from the research: a model trained on the boiling points of known elements may eventually be asked about “an element with atomic number 500.” There is no such element, but the model will extrapolate anyway. If retrained on those outputs, it builds a periodic table of imaginary matter that no longer maps to real chemistry. Catastrophic forgetting follows: the model loses previously learned capabilities, and data poisoning compounds the problem as the model begins preferring its own synthetic approximations over accurate real-world examples.[11]

The solution, current research agrees, is surprisingly accessible. Adding as little as 10% of authentic human-generated data to an otherwise synthetic dataset significantly improves model confidence and accuracy.[11] The endgame for practitioners is not “all synthetic, no humans”; it is a hybrid pipeline in which synthetic data expands and stress-tests a core of carefully curated real data, while human-in-the-loop validation keeps the system grounded.[8]
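The recursive loop and the effect of mixing in fresh real data can be illustrated with a deliberately tiny simulation. Here the "model" is just a Gaussian refitted each round to ten samples. That stand-in, the sample size, and the round count are all assumptions chosen to make the effect visible, and the sketch does not reproduce the cited experiments.

```python
import random
import statistics

def simulate(generations, sample_size, real_fraction, rng):
    """Refit a Gaussian each round on samples drawn mostly from the previous fit.

    `real_fraction` of every round's samples come from the true distribution,
    N(0, 1); the rest come from the model fitted in the previous round.
    Returns the fitted standard deviation after each round.
    """
    mean, std = 0.0, 1.0  # round 0: the model matches the real data
    n_real = round(sample_size * real_fraction)
    history = [std]
    for _ in range(generations):
        synthetic = [rng.gauss(mean, std) for _ in range(sample_size - n_real)]
        real = [rng.gauss(0.0, 1.0) for _ in range(n_real)]
        batch = synthetic + real
        mean, std = statistics.fmean(batch), statistics.stdev(batch)
        history.append(std)
    return history

pure = simulate(500, 10, real_fraction=0.0, rng=random.Random(1))
anchored = simulate(500, 10, real_fraction=0.1, rng=random.Random(1))
print(f"synthetic only: final std = {pure[-1]:.2e}")
print(f"10% real data:  final std = {anchored[-1]:.2e}")
```

With no real data, the fitted spread drifts toward zero: the tails disappear first and eventually almost all diversity is lost. A single real sample per round is enough to stop the spread from collapsing in this toy setting, which mirrors the hybrid-pipeline argument without saying anything about the right ratio for a real model.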

Privacy Leakage: The Residual Risk

A second and less-discussed risk is that synthetic data does not always fully anonymise its source. When outliers or unique identifiers are not properly handled during generation, statistical traces of real individuals can remain in synthetic datasets, making it possible to reverse-engineer real records from synthetic ones and reintroducing the very privacy risks that synthetic data was meant to eliminate.[14] A November 2025 regulatory review published in npj Digital Medicine confirmed that residual privacy vulnerability and insufficient oversight remain primary ethical concerns in healthcare synthetic data governance.[15]
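One simple screen for this kind of leakage measures how close each synthetic record sits to its nearest real record. The sketch below is a minimal version of such a distance-to-closest-record check. The two columns, the scaling to [0, 1], and the `too_close` threshold are illustrative assumptions, and passing the check is not proof of anonymity.

```python
import math

def nearest_distance(row, others):
    """Euclidean distance from `row` to its closest neighbour in `others`."""
    return min(math.dist(row, other) for other in others)

def privacy_report(real_rows, synthetic_rows, too_close=0.05):
    """Flag synthetic rows that copy, or nearly copy, a real record."""
    distances = [nearest_distance(s, real_rows) for s in synthetic_rows]
    return {
        "exact_copies": sum(d == 0.0 for d in distances),
        "near_copies": sum(0.0 < d <= too_close for d in distances),
        "min_distance": min(distances),
    }

# Hypothetical records already scaled to [0, 1]: (age, income).
real = [(0.20, 0.30), (0.25, 0.35), (0.95, 0.99)]  # the last row is an outlier
synthetic = [(0.40, 0.10), (0.95, 0.99), (0.94, 0.98), (0.50, 0.50)]
report = privacy_report(real, synthetic)
print(report)
```

In this toy data the outlier is reproduced once exactly and once almost exactly, which is the pattern described above: unusual individuals are the easiest to memorise and the easiest to re-identify.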

Bias Amplification and “Data Laundering”

Synthetic data generated from biased real-world datasets inherits and can amplify those biases. If the source data underrepresents certain demographics, the synthetic version will do the same at scale. More troubling still is a practice critics have called “data laundering”: using synthetic data to obscure the provenance of training data, potentially circumventing consent frameworks and copyright protections that real data would require.[16] As the WEF observed, deepfakes and AI-generated voice cloning represent the most publicly visible manifestation of this risk: when people can no longer trust what they see or hear, “the consequences ripple far beyond technical systems.”[2]
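Inherited under-representation is easy to measure before a synthetic dataset is released. The sketch below compares each group's share in the source and synthetic data against a reference population. The group labels, the counts, and the even reference split are hypothetical.

```python
from collections import Counter

def group_shares(records, key):
    """Share of records belonging to each value of `key`."""
    counts = Counter(record[key] for record in records)
    total = sum(counts.values())
    return {group: count / total for group, count in counts.items()}

def representation_gaps(reference_shares, records, key):
    """Observed share minus reference share for every reference group."""
    observed = group_shares(records, key)
    return {g: observed.get(g, 0.0) - share for g, share in reference_shares.items()}

# Hypothetical numbers: the population is split evenly, the source data is not.
population = {"group_a": 0.5, "group_b": 0.5}
source = [{"group": "group_a"}] * 80 + [{"group": "group_b"}] * 20
synthetic = [{"group": "group_a"}] * 850 + [{"group": "group_b"}] * 150

source_gaps = representation_gaps(population, source, "group")
synthetic_gaps = representation_gaps(population, synthetic, "group")
print(source_gaps)
print(synthetic_gaps)
```

A gap that widens between the source and the synthetic output, as in this made-up example, indicates that the generator is amplifying the imbalance rather than merely inheriting it.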

The regulatory response is accelerating. For high-risk AI systems, the EU AI Act permits processing of special categories of personal data for bias detection and correction only where that purpose cannot be met with other data, including synthetic or anonymised data, which makes synthetic data governance a compliance consideration rather than merely a best practice. GDPR fines totalling over $2.77 billion between 2018 and 2023 have already made privacy-preserving technologies essential for European enterprise operations. Nineteen US state privacy laws had come into effect by 2025.[4]

Section V — Validation and Governance

Measuring What Cannot Be Seen: The Quality Framework Problem

One of the most practically important and least publicly discussed challenges in synthetic data is validation. How does an organisation know whether its synthetic dataset is actually good? The question is more complex than it appears, because “good” has at least three separate dimensions that can conflict with one another.

Emerging industry frameworks conceptualise synthetic data quality across three axes. Fidelity: how statistically close the synthetic data is to the real-world source. Utility: how effectively models trained on synthetic data perform on real-world tasks. Privacy: how robustly the synthetic data prevents re-identification of the real individuals in the source dataset.[4] Maximising all three simultaneously is structurally difficult: techniques that improve fidelity often reduce privacy, while techniques that maximise privacy can degrade utility.
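Two of these axes can be illustrated in a few lines. The sketch below scores fidelity as the total variation distance between label frequencies, and utility by training a deliberately trivial classifier on synthetic rows and testing it on real ones. The metric choices, the one-feature classifier, and the toy fraud data are assumptions for illustration. The privacy axis needs its own record-level checks and is left out here.

```python
from collections import Counter
import statistics

def total_variation(real_values, synthetic_values):
    """Fidelity: 0.0 means identical category frequencies, 1.0 means disjoint."""
    real_counts, synth_counts = Counter(real_values), Counter(synthetic_values)
    categories = set(real_counts) | set(synth_counts)
    return 0.5 * sum(
        abs(real_counts[c] / len(real_values) - synth_counts[c] / len(synthetic_values))
        for c in categories
    )

def fit_threshold(rows):
    """Tiny classifier: split halfway between the two class means of the feature."""
    positives = [x for x, label in rows if label == 1]
    negatives = [x for x, label in rows if label == 0]
    return (statistics.fmean(positives) + statistics.fmean(negatives)) / 2

def accuracy(threshold, rows):
    """Fraction of rows where 'feature above threshold' matches the label."""
    return sum((x > threshold) == bool(label) for x, label in rows) / len(rows)

# Hypothetical (transaction_amount, is_fraud) rows.
real_train = [(10, 0), (20, 0), (30, 0), (80, 1), (90, 1)]
real_test = [(15, 0), (25, 0), (35, 0), (70, 1), (95, 1)]
synthetic = [(12, 0), (22, 0), (28, 0), (75, 1), (85, 1)]

fidelity_gap = total_variation(
    [label for _, label in real_train], [label for _, label in synthetic]
)
utility_real = accuracy(fit_threshold(real_train), real_test)   # train real, test real
utility_synth = accuracy(fit_threshold(synthetic), real_test)   # train synthetic, test real
print(fidelity_gap, utility_real, utility_synth)
```

Comparing `utility_synth` against the `utility_real` baseline is what gives a utility score meaning: the interesting number is how much performance is lost by replacing the real training set.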

Academic research presented at KDD 2025 (the ACM SIGKDD Conference on Knowledge Discovery and Data Mining, held in Toronto) explored the generation and evaluation of synthetic survey data, highlighting that standard quantitative metrics often fail to capture scientific or domain-specific relevance, underscoring the need for expert-in-the-loop validation protocols that go beyond automated scoring pipelines.[17] A BMC Medical Informatics paper published in 2025, SynthRo, presented a dedicated dashboard for evaluating and benchmarking synthetic tabular data in healthcare, representing early progress toward standardised quality assessment.[18]

A March 2025 academic consensus, published via arXiv, emphasised the need for stronger privacy metrics, particularly around identity and attribute disclosure, noting that current industry measures often fall short of what regulators and researchers require.[19] The governance gap is real: the Lancet Digital Health has called for urgent development of synthetic data privacy frameworks for medical research, and ISO/IEC AWI TR 42103 is in active development to provide international standardisation.[15]

“Realising the benefits of synthetic data while mitigating known risks is a shared responsibility between engineers, policy advisors, executives, and users working collaboratively and proactively.” (World Economic Forum, Global Future Council on Data Frontiers, October 2025)

Section VI — Strategic Outlook

The Hybrid Future: Where Synthetic Data Fits in the AI Stack

The strategic consensus that has emerged across the research community and practitioner literature is clear and consistent: the future of AI training data is hybrid. The question is no longer whether to use synthetic data, but how to integrate it responsibly into pipelines that remain grounded in human truth.

MIT researcher Kalyan Veeramachaneni, speaking to MIT News in September 2025, summarised the fundamental principle: synthetic data works best as a complement to real-world data, not a replacement for it.[20] IBM’s November 2025 analysis of the promises, risks, and realities of synthetic data reached the same conclusion from an enterprise perspective: “synthetic data should complement real-world data, not replace it,” and creating high-quality synthetic data “requires thoughtful design, careful validation and ongoing monitoring.”[14]

The competitive landscape for synthetic data tooling is maturing rapidly. Enterprise platforms from MOSTLY AI, Gretel, Hazy, Synthesis AI, and Sogeti (part of Capgemini) now offer privacy-preserving synthetic data generation across tabular, visual, and text modalities.[21] Open-source alternatives including Synthea (for healthcare) and the Synthetic Data Vault (SDV) ecosystem (for tabular, relational, and time-series data) are making enterprise-grade capabilities accessible to research teams and smaller organisations.[21]

Perhaps the most telling signal of synthetic data’s strategic maturity is the regulatory posture of major jurisdictions. Regulators are no longer merely tolerating synthetic data; they are beginning to mandate its exploration. When the EU AI Act requires organisations to consider synthetic alternatives before processing sensitive personal data, the technology moves from the experimental budget to the compliance budget. And compliance budgets, as every enterprise knows, are where technologies achieve durability.

The Bottom Line

Synthetic data is neither a magic solution nor a dangerous distraction. It is a disciplined engineering approach to a genuinely hard problem: AI systems need more data, better data, and more diverse data than the real world can safely or affordably provide.

Used with rigour (anchored in real human data, validated across fidelity, utility, and privacy dimensions, and governed by frameworks that evolve with the technology), synthetic data is one of the most powerful tools available to the AI development community. Used carelessly, it risks hollowing out the very systems it is meant to improve.

The organisations that will lead the next decade of AI are those that learn to tell the difference.

All sources reflect published research, industry reports, and peer-reviewed literature from 2023–2026. URLs verified March 2026.

[1] World Economic Forum. Artificial Intelligence and the Growth of Synthetic Data. October 2025. weforum.org

[2] World Economic Forum, Global Future Council on Data Frontiers. Synthetic Data: The New Data Frontier. Briefing Paper, September 2025. reports.weforum.org

[3] Research and Markets / Globe Newswire. AI-Generated Synthetic Tabular Dataset Global Market Report 2025. January 29, 2026. globenewswire.com

[4] Articsledge. What is Synthetic Data? Complete 2026 Guide to AI-Generated Data. March 2026. articsledge.com

[5] arXiv. Generative AI for Autonomous Driving: A Review. December 2, 2025. arxiv.org; PMC. Synthetic Scientific Image Generation with VAE, GAN, and Diffusion Model Architectures. J Imaging, 2025. pmc.ncbi.nlm.nih.gov

[6] PMC. Synthetic data generation by diffusion models. pmc.ncbi.nlm.nih.gov

[7] Mendes JM, Barbar A, Refaie M. Synthetic data generation: a privacy-preserving approach to accelerate rare disease research. Frontiers in Digital Health, 7:1563991, February 25, 2025. doi:10.3389/fdgth.2025.1563991. frontiersin.org

[8] InvisibleTech AI. AI Training in 2026: Anchoring Synthetic Data in Human Truth. December 3, 2025. invisibletech.ai

[9] Potluru VK, et al. Synthetic data applications in finance. arXiv:2401.00081, 2023; as cited in arXiv. Escaping Model Collapse via Synthetic Data Verification. October 18, 2025. arxiv.org

[10] Lin H, et al. Generative models for the evolution of transportation systems. ScienceDirect, December 2025. doi:10.1016/j.ssaho; sciencedirect.com

[11] Xenoss. How to Use Synthetic Data in 2025: Benefits, Risks, Examples. August 28, 2025. xenoss.io

[12] Netguru. Synthetic Data: Revolutionizing Modern AI Development in 2025. September 9, 2025. netguru.com

[13] ManageEngine Insights. AI Model Collapse: The Synthetic Data Trap and How to Avoid It. December 17, 2025. insights.manageengine.com

[14] InformationWeek. The Real-World Benefits and Risks of Synthetic Data. November 13, 2025. informationweek.com

[15] PMC / npj Digital Medicine. Protecting patient privacy in tabular synthetic health data: a regulatory perspective. Published November 28, 2025. doi:10.1038/s41746-025-02112-0. pmc.ncbi.nlm.nih.gov

[16] WebProNews. Synthetic Data in AI: Scalability Benefits and Hidden Risks. August 26, 2025. webpronews.com

[17] Jiang Y, et al. Synthetic Survey Data Generation and Evaluation. ACM SIGKDD (KDD ’25), Toronto, August 2025. doi:10.1145/3690624.3709421. dl.acm.org

[18] Santangelo G, et al. How Good Is Your Synthetic Data? SynthRo, a Dashboard to Evaluate and Benchmark Synthetic Tabular Data. BMC Medical Informatics and Decision Making, 25(1):89, 2025.

[19] DSC Next Conference / arXiv consensus. Synthetic Data: The Future of Data Science in 2025. September 2025. dscnextconference.com

[20] Veeramachaneni K. 3 Questions: The Pros and Cons of Synthetic Data in AI. MIT News, September 3, 2025. news.mit.edu

[21] IBM Think. Examining Synthetic Data: The Promise, Risks and Realities. November 18, 2025. ibm.com

[22] LinuxSecurity. Leading Synthetic Data Solutions for AI Development and Testing in 2025. September 21, 2025. linuxsecurity.com